
Building Real-Time Communication with Apache Spark through Apache Livy

Dockerizing and Consuming an Apache Livy environment

We all know what Apache Spark is. The two usual ways to submit jobs to an Apache Spark cluster are spark-submit and spark-shell, and each comes with limitations when you need real-time interaction. So what happens when you want to submit Spark jobs interactively from a web or mobile application?

There are cases where your Apache Spark cluster is hosted on on-premise infrastructure and many users need to run heavy aggregations against your organization’s data sources concurrently, from their mobile phones, web, or desktop applications. Creating a “Spark-as-a-Service” environment to solve this is not as difficult as it sounds. One solution is to expose your data sources over JDBC/ODBC via the Spark Thrift Server; another is to use Apache Livy.

I don’t know whether Apache Livy should now be seen as a workaround, given Apache Spark’s aggressive foray into the cloud through managed services like Google Cloud Dataproc or AWS EMR. Either way, this article shows and explains a dockerized environment that you can use as a template to quickly deploy a consumable Apache Livy environment.

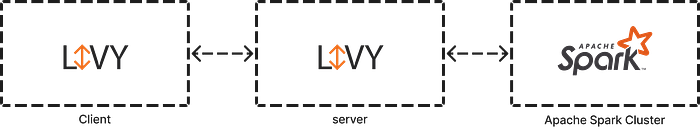

I will try to be brief in explaining what Apache Livy is: it is a service that enables easy interaction with an Apache Spark cluster over a REST interface. See the image below:

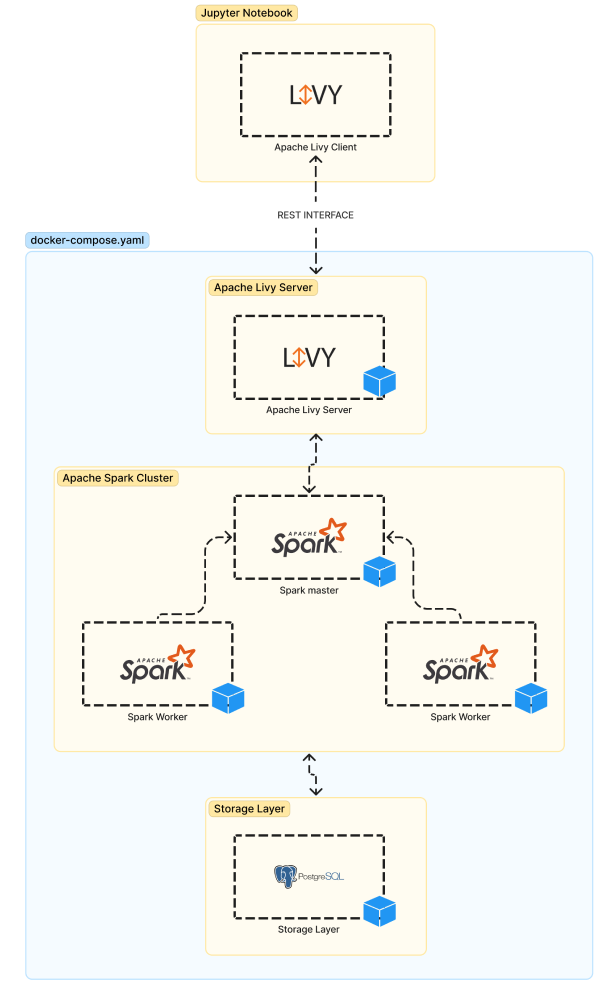
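To make the REST interaction concrete, here is a minimal sketch of a client that opens an interactive Livy session, runs one code snippet, and reads the result. It is not the client built in this article, only an illustration. It assumes a Livy server reachable at `http://localhost:8998` (Livy's default port), and the helper names `build_request`, `call`, `wait_for`, and `run_snippet` are hypothetical.

```python
import json
import time
import urllib.request

# Assumption: Livy is listening locally on its default port.
LIVY_URL = "http://localhost:8998"


def build_request(method, path, payload=None, base_url=LIVY_URL):
    """Build a JSON HTTP request for the Livy REST API without sending it."""
    data = json.dumps(payload).encode("utf-8") if payload is not None else None
    return urllib.request.Request(
        url=f"{base_url}{path}",
        data=data,
        method=method,
        headers={"Content-Type": "application/json"},
    )


def call(request):
    """Send a request and decode the JSON response body."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


def wait_for(path, done_states, poll_seconds=1.0):
    """Poll a session or statement until its 'state' is one of done_states."""
    while True:
        body = call(build_request("GET", path))
        if body["state"] in done_states:
            return body
        time.sleep(poll_seconds)


def run_snippet(code, kind="pyspark"):
    """Create a session, run one statement in it, and return its output."""
    session = call(build_request("POST", "/sessions", {"kind": kind}))
    session_path = f"/sessions/{session['id']}"
    try:
        # A new session must reach 'idle' before it accepts statements.
        ready = wait_for(session_path, {"idle", "error", "dead", "killed"})
        if ready["state"] != "idle":
            raise RuntimeError(f"session ended in state {ready['state']}")
        statement = call(
            build_request("POST", f"{session_path}/statements", {"code": code})
        )
        statement_path = f"{session_path}/statements/{statement['id']}"
        result = wait_for(statement_path, {"available", "error", "cancelled"})
        return result["output"]
    finally:
        # Always release the cluster resources held by the session.
        call(build_request("DELETE", session_path))


# Example (needs a running Livy server):
# output = run_snippet("print(1 + 1)")
```

Polling is the simplest way to follow a session or statement over plain HTTP. A web or mobile backend would more likely keep one session alive across requests instead of creating and deleting one per snippet, since starting a session is slow.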

As you can see, to reproduce a real example we need three components:

- Apache Spark Cluster

- Apache Livy Server

- Apache Livy Client

As additional components, I would add Docker for a faster implementation and a PostgreSQL database server to simulate an external data source available to Apache Spark.